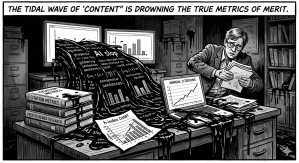

Currently, I hear many people complaining, and I complain myself, about the AI-generated references, papers, and reviews that are entering NLP and, to a lesser degree, Philosophy. But the AI Slop that is currently flooding various scientific disciplines might actually serve as a force for good by catalyzing a basic reconception of how academic merit is measured and, correspondingly, how resources are allocated.

The Background: Questionable Metrics to Measure Research Quality

The way academic merit has come to be measured over the past decades in most disciplines, including Natural Language Processing (NLP) and, to a lesser degree, Philosophy, is by quantitative metrics, in particular citation-based indices such as the h-index or the i10-index. Incidentally, these are also typically an important factor in university rankings. In theory, the indices were intended to track how relevant one’s research is for one’s peers. In practice, however, they create a strong incentive for questionable behavior on the level of the individual academic: Always aim for the smallest publishable unit, only publish in areas that receive attention from the herd, and never, never take a year off the treadmill to work on something truly original and disruptive, because it’s poison for your citation scores, and because nobody will take the time to read and cite it if it’s truly original.
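To make the incentive concrete, here is a minimal sketch of how the two indices are commonly defined: the h-index is the largest h such that h papers have at least h citations each, and the i10-index counts papers with at least 10 citations. The function names and the two citation records are hypothetical and chosen purely for illustration; they do not describe any real researcher.

```python
def h_index(citations):
    """Largest h such that at least h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank
        else:
            break
    return h


def i10_index(citations):
    """Number of papers with at least 10 citations."""
    return sum(1 for count in citations if count >= 10)


# Hypothetical citation records (assumptions, not real data):
one_big_paper = [250]  # a single, heavily cited piece of work
many_small_papers = [12, 11, 10, 10, 9, 8, 7, 6, 5, 5]  # ten thin slices

for label, record in [("one big paper", one_big_paper),
                      ("many small papers", many_small_papers)]:
    print(label, "| total citations:", sum(record),
          "| h-index:", h_index(record),
          "| i10-index:", i10_index(record))
```

In this made-up comparison, the single paper collects 250 citations but yields an h-index of 1 and an i10-index of 1, while the ten small papers collect only 83 citations in total and yield an h-index of 7 and an i10-index of 4. Under these definitions, slicing work thinly is rewarded more than one substantial contribution.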

Still, one could say, the system had its advantages: The metrics had some merit insofar as they allowed for comparison of relative research output between researchers in the same field. They usually correlated roughly with achievement in a given discipline, and one could push them with hard and solid work. Peer review functioned to filter out work that was too uninspired or methodologically unfit.

AI Slop Is Obliterating these Quantitative Metrics

However, AI-supported academic paper writing is removing whatever rationality these quantitative citation metrics once had, right before our eyes.

Researchers subject to said incentive structures are using generative AI to game the metrics at an unprecedented scale. For instance, at some point, I was searching for literature on AI Ethics in scientific research. One of the articles that popped up had an interesting title, and the abstract promised just what I was looking for. I am an expert in the field, but it took me careful study of the paper to find that it was in fact not contributing anything of significance, just adding platitude after platitude.

I then checked the author of the paper and found that they had published 14 papers. Just in 2025. Only single-authored pieces, not counting co-authored papers. And, of course, that researcher was busy citing their own research, boosting citation scores with each AI-Slop-Article. The research profile looks stellar, with an h-index of 24, and the paper I was studying has been published in a leading journal in the field, owned by a major publisher.

For reviewers (who, incidentally, are working without pay for the big publishing companies), it becomes close to impossible to keep filtering the still-existing solid research from the AI Slop. This means that non-articles such as the one mentioned get published, while potentially interesting research gets rejected simply because the reviewers are under too high a cognitive load to process it.

In sum, this simply means that the quantitative citation metrics lose any remaining credibility and rationale: Having an h-index of 24 and publications in top-tier journals does not entail that you have done any good research. Period. Thanks to AI, the deficiencies inherent in the system have been magnified to a degree that one cannot overlook them anymore.

The Way Out: Actually Study Their Research

Perhaps somewhat ironically, this might bring people back to actually reading the research of their peers to judge the quality of their work.

This suggests that when committees make important hiring decisions, say, when evaluating different applications for a tenure-track professorship, they should probably largely ignore quantitative metrics and focus on a small selection of research articles, perhaps suggested by the applicants themselves, and actually read and assess them.

On the level of incentive structures implemented by university managers and policymakers, this suggests focusing aggressively on quality instead of quantity. If a researcher does not gain anything (no tenure, no premium, no respect) merely by publishing 14 single-authored papers a year, whatever their actual quality, then they will stop doing so and maybe start putting out fewer, but actually interesting and relevant, papers.

In this sense, AI might actually support research.
